In the run-up to Future Sessions – The Air of Turbulence Symposium, we’re introducing you to our brilliant speakers. Today we meet Elvia Vasconcelos, a design researcher exploring the human impact of technology.

Meet Elvia Vasconcelos – a design researcher and activist who will lead a critical exploration of voice technologies at Future Sessions – The Air of Turbulence, Mon 8 July.

Hi Elvia, could you tell us a bit about you and what you do?

I’m a design researcher and sketchnoter, which means that I am curious about people and I speak in drawings.

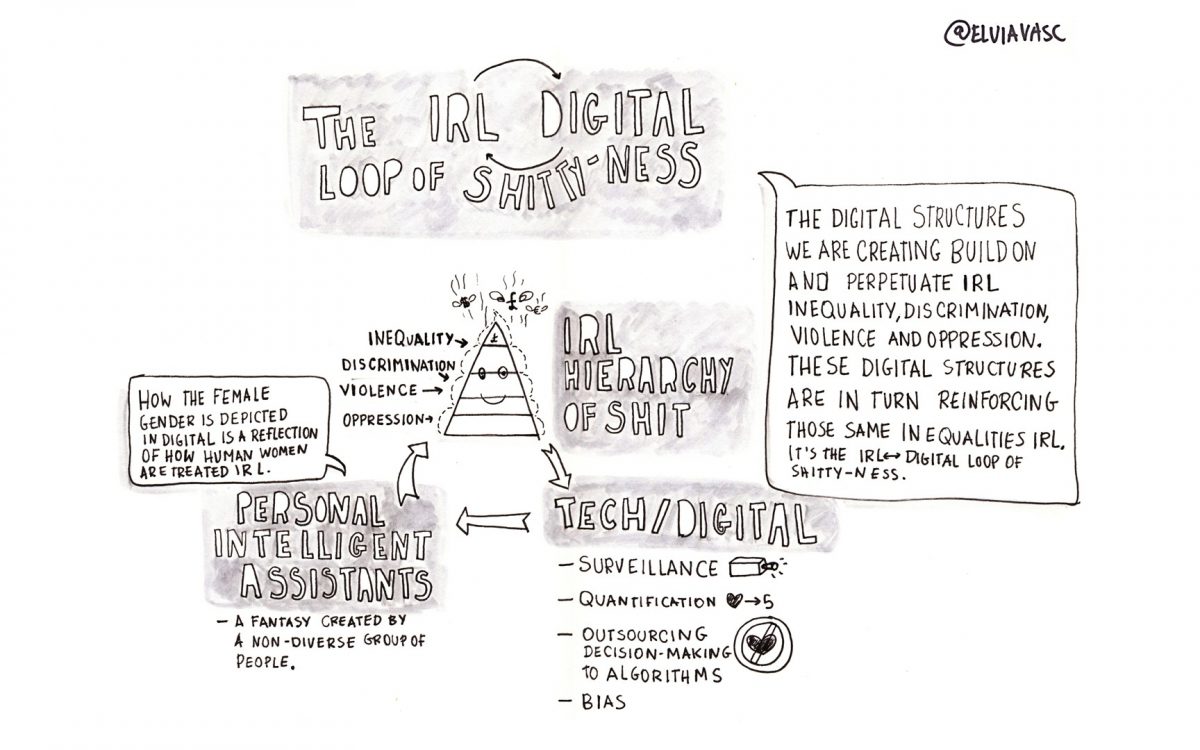

In recent years I have been focusing on how the structures of oppression that exist in real life are being mirrored, reinforced and amplified by the digital systems we create.

For the symposium participants, I am proposing a session where we collectively and critically explore the invisible power structures embodied in everyday voice technologies and create a tangible output that makes them accessible to public discourse. #memesFTW

What are the questions you’re interested in raising through your practice, and why is it important we have these conversations now?

“Because I don’t also want to live in the future of Elon Musk, the one white men have predicted for us.” Morehshin Allahyari, podcast ‘Talking Digital Colonialism’

The digital systems we’re creating reflect the view of the world of the non-diverse privileged group of people that create and control these systems: the ones in power, mostly white men.

What is our role as design practitioners in relation to power and inequality? What should we do with our privileged positions, being involved in decision making or at least having a hand in which digital structures are created and what shape they take? How can we re-evaluate our methods to challenge tech solutionism in favour of critical questioning: what is being created, by whom, for whom and what for? Who will really benefit from this? How and where can we expose and sit with such complexity?

What does ‘voice’ mean to you in the context of technology and digital culture?

I immediately think of Alexa.

Personal Intelligent Assistants (PIAs) have long been layered with human traits. The tech industry has largely failed to critically engage with the complexities that lie behind using female voices. To justify the female gendering of PIAs, companies have been leaning on market studies that conclude things like “People perceive female voices as helping us solve our problems by ourselves while they view male voices as authority figures who tell us the answers to our problems.” Jessi Hempel quoting Clifford Nass, ‘Siri and Cortana Sound Like Ladies Because of Sexism’

This is BS. What studies like this fail to engage with is why people prefer a female identity (not just a voice) in an assistant function, and whether that is something we want to reinforce or subvert.

The future is increasingly driven by algorithms… should we be concerned?

“We created poverty. Algorithms won’t make that go away.” Virginia Eubanks, ‘Automating Inequality’

Algorithms are not going to save our future because technology is not the solution to all our problems.

The problems we face are deep and complex. Throwing technology at problems is at best naïve and at worst catastrophic. Using algorithms for automated decision making is particularly concerning because it turns out to be just a way of shifting really complex decisions to algorithms, in ways that are often invisible and unaccountable (riffing off Virginia Eubanks’s work again), without properly engaging with the social, political and economic roots behind problems.

We shouldn’t just be concerned, we should be actively working towards alternative and fairer futures.

In the world of tech and digital culture right now, what do you see as the most exciting opportunities and the biggest challenges or fears/concerns?

Having worked with human-centred approaches for years, I feel an urgent need to go beyond the current methods towards spaces and processes of true participation (meaning a balanced power dynamic). Going towards designing ‘with people’ and away from ‘for people’.

To do that, I believe we need to go in the direction of bottom-up strategies that combine formal and informal knowledge to allow us to collectively decide which problems we should be focusing on and the strategies to take. These strategies are what we need to mobilise humans and machines to address real problems.

This means that our role as design practitioners is about creating strategies to address systemic issues that go beyond technological systems.

You can join a participatory workshop exploring voice technologies with Elvia at Future Sessions – The Air of Turbulence Symposium on Mon 8 July. Part of our Future Sessions: Atmospheric Memory programme.